Are you planning a bathroom remodel in Glenview, IL, but feeling overwhelmed by the multitude of options available? As you embark on this exciting journey to transform your bathroom into a luxurious sanctuary,…

Colombia, a country blessed with unmatched water wealth, faces the constant challenge of managing and preserving its surface water resources. These resources, vital for sustaining biodiversity, the…

Maintaining a healthy credit score is crucial for financial stability and access to opportunities such as loans, mortgages, and favorable interest rates. However, navigating the complex world of credit repair in Texas can…

In the realm of outdoor home improvement, fence staining stands out as a savvy investment that extends far beyond mere aesthetics. While enhancing the visual appeal of your property is undoubtedly a benefit,…

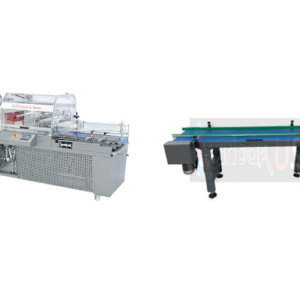

In today’s fast-paced industrial landscape, efficiency and productivity are paramount. To meet the demands of modern manufacturing, businesses rely on innovative technologies to streamline their production processes. Two such technologies that play a…

Personal trainers are experienced professionals who specialise in designing customised workout programs tailored to your unique needs and goals. With their expertise, support, and accountability, you can unlock your strength potential and transform…

Introduction: In a constantly evolving world, urban planning has become an increasingly complex and vital task for the sustainable development of our cities and communities. In the…

In the world of personal and business finance, maintaining a healthy credit report is paramount. A strong credit report opens doors to favorable interest rates, loan approvals, and financial opportunities. However, if your…

Investing in a quality table leaf bag is paramount when preserving the beauty and functionality of your dining table. Whether you own a cherished heirloom or a modern dining set, proper storage is…

In the realm of interior design and home improvement, finding the perfect balance between aesthetics and functionality is key. One transformative solution that has been gaining popularity is epoxy floor coating. This versatile…
